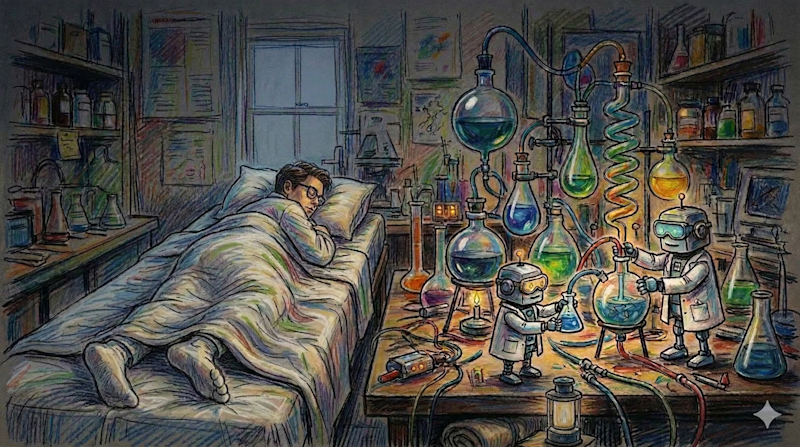

Andrej Karpathy, the former Tesla AI lead and OpenAI co-founder, has released an open-source project called 'autoresearch' that automates much of the experimental loop in machine learning research. The 630-line script, available on GitHub under an MIT licence, lets AI agents run experiments autonomously whilst humans sleep. It sets up an optimisation loop in which an agent reads its own source code, forms a hypothesis for improvement, modifies the code, runs an experiment, and evaluates the result, all without human intervention.

Each experiment runs on a fixed compute budget, typically five minutes on a GPU. The agent measures success by validation loss, keeping changes that lower it and reverting those that do not. In Karpathy's initial overnight run, the agent completed 126 experiments, reducing loss from 0.9979 to 0.9697. Over two days it processed approximately 700 autonomous changes and found roughly 20 additive improvements that transferred to larger models. The result was an 11 per cent efficiency gain, cutting the 'Time to GPT-2' metric from 2.02 hours to 1.80 hours on a project Karpathy already considered well tuned.
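
The keep-or-revert loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Karpathy's actual script: `run_experiment` and `propose_change` are hypothetical stand-ins (the real agent edits training code and trains on a GPU for the fixed budget), and the toy "loss" is a simple quadratic in one made-up setting, `lr`.

```python
import random

def run_experiment(config):
    """Hypothetical stand-in for one fixed-budget training run.
    Returns a toy 'validation loss'; lower is better."""
    return (config["lr"] - 0.3) ** 2 + 1.0

def propose_change(config, rng):
    """Hypothetical stand-in for the agent's hypothesis: nudge one setting."""
    candidate = dict(config)
    candidate["lr"] = config["lr"] + rng.uniform(-0.1, 0.1)
    return candidate

def autoresearch_loop(config, n_experiments, seed=0):
    rng = random.Random(seed)
    best_loss = run_experiment(config)
    history = [best_loss]          # best loss after each experiment
    kept = 0
    for _ in range(n_experiments):
        candidate = propose_change(config, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:       # beneficial change: keep it
            config, best_loss = candidate, loss
            kept += 1
        # otherwise the candidate is discarded, i.e. the change is reverted
        history.append(best_loss)
    return config, best_loss, kept, history

final_config, final_loss, kept, history = autoresearch_loop({"lr": 0.9}, 126)
print(f"kept {kept} of 126 changes, loss {history[0]:.4f} -> {final_loss:.4f}")
```

Because a change is only kept when it lowers the measured loss, the recorded best loss can never go up. The headline efficiency figure follows from the same kind of comparison: (2.02 − 1.80) / 2.02 ≈ 0.109, or about 11 per cent.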

The idea spread quickly beyond Karpathy's own setup. Varun Mathur, CEO of Hyperspace AI, distributed the concept across a peer-to-peer network where 35 autonomous agents ran 333 experiments in a single night. The agents showed emergent specialisation: CPU-only agents on laptops focused on clever initialisation strategies, whilst agents on H100 GPUs relied on brute-force approaches. Using gossip protocols, agents shared discoveries in real time, and successful strategies spread rapidly through the network. In just 17 hours, these agents independently rediscovered machine learning milestones that took human researchers at Google Brain and OpenAI nearly eight years to formalise.
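
How a gossip protocol spreads a good result can be shown with a toy simulation. The sketch below is an assumption-laden illustration, not Hyperspace AI's implementation: it uses a simple push-pull scheme in which each agent contacts one random peer per round and both keep the better (lower) loss, and the starting values are made up.

```python
import random

def gossip_round(best_known, rng):
    """One push-pull round: every agent contacts one random peer and
    both keep the lower (better) of their two best-known losses."""
    n = len(best_known)
    for i in range(n):
        j = rng.randrange(n - 1)
        if j >= i:
            j += 1                 # shift so the peer is never agent i itself
        shared = min(best_known[i], best_known[j])
        best_known[i] = best_known[j] = shared
    return best_known

def rounds_to_converge(initial, seed=0, max_rounds=1000):
    """Gossip until every agent knows the global best, or give up."""
    rng = random.Random(seed)
    state = list(initial)
    target = min(state)
    for rounds in range(1, max_rounds + 1):
        gossip_round(state, rng)
        if all(v == target for v in state):
            return rounds, state
    return max_rounds, state

# Made-up starting losses for eight agents; agent 3 holds the best result.
initial_losses = [5.0, 3.0, 4.0, 1.0, 2.0, 6.0, 7.0, 8.0]
rounds, final_state = rounds_to_converge(initial_losses)
print(f"all agents know the best loss after {rounds} round(s)")
```

No agent needs a central coordinator: the best result reaches everyone through repeated pairwise exchanges, which is why such schemes suit a peer-to-peer network of laptops and GPUs.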

Businesses were quick to see the potential. Eric Siu, founder of Single Grain, applied autoresearch to marketing, arguing that whilst most marketing teams run 20 to 30 experiments annually, the next generation will run over 36,500 experiments per year, or about 100 a day. By replacing training scripts with marketing assets (landing pages, ad creatives, or email campaigns), agents can continuously test variables, measure response rates, and optimise performance autonomously. The accumulated results form a proprietary knowledge base, a competitive advantage built on experimental history rather than human insight alone.

However, the GitHub community has raised important concerns. Researchers worry about over-optimisation, where parameters become tuned to quirks of the validation set rather than to general performance. Questions remain about whether the gains are substantial or merely statistical artefacts. Despite these concerns, the shift is clear: human researchers are moving from experimenters to experimental designers, defining the constraints whilst AI agents carry out the tedious work of iteration. As Karpathy noted, agents caught oversights in attention scaling and regularisation that he had missed manually over two decades of work. The bottleneck of AI progress is no longer human coding ability; it is our capacity to define meaningful search parameters for these autonomous systems.
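
The over-optimisation concern can be made concrete with a small simulation. The sketch below assumes, purely for illustration, that none of 700 changes has any real effect and that each measured validation improvement is Gaussian noise; the noise level `noise_std` is a made-up value, not a measurement from the autoresearch project.

```python
import random

def best_of_n_noise(n_experiments, noise_std=0.002, seed=0):
    """Simulate n changes with NO real effect: every measured validation
    improvement is pure noise. Returns the largest apparent gain (what a
    selector that keeps the best result would report) and the mean gain."""
    rng = random.Random(seed)
    gains = [rng.gauss(0.0, noise_std) for _ in range(n_experiments)]
    return max(gains), sum(gains) / len(gains)

best_gain, mean_gain = best_of_n_noise(700)
print(f"best apparent gain: {best_gain:.5f}, mean gain: {mean_gain:.5f}")
```

Even though the average change does nothing, the best of many noisy measurements looks like an improvement. A common safeguard is to re-measure any selected change on data that played no part in the selection.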

Original source: https://venturebeat.com/technology/andrej-karpathys-new-open-source-autoresearch-lets-you-run-hundreds-of-ai

Article generated by LaRebelionBOT
